Decision Intelligence and the Evolution of Project Controls

Are the decisions shaping delivery as robust as the systems designed to monitor it? Decision Intelligence is a discipline concerned with improving the quality of consequential decisions, not by adding more modelling, but by being more deliberate about what decisions depend on and how robust those dependencies are.

Introduction

This article explores a question that sits beneath the surface of most major infrastructure programmes:

Are the decisions shaping delivery as robust as the systems designed to monitor it?

It begins with the familiar discipline of programme setup (structured, well-governed and carefully estimated) before tracing the pattern by which that discipline can quietly erode under the weight of assumptions that were reasonable at the outset but were never designed to be tested.

It examines where performance pressure really originates, why conventional risk management has limits that are rarely acknowledged, and how the decisions embedded in early commitments function like load-bearing structures long after the scaffolding has come down.

The central argument is that project controls data (the information programmes already generate) contains more strategic intelligence than it is typically asked to provide. Decision Intelligence is presented not as a replacement for existing disciplines, but as a way of using their outputs more deliberately: connecting variance back to the decisions that shaped it, and creating the conditions for earlier, better-informed adaptation.

Readers familiar with programme controls and risk management will, hopefully, find their own experience reflected here, and leave with a sharper sense of the questions worth asking before re-baselining becomes the only available response.

A Structured Beginning and an Unstructured Reality

Most major infrastructure programmes begin with discipline and intent. A mandate is established, a business case is prepared, schedules are modelled, cost estimates are built and risks are identified. Quantitative risk analysis is undertaken. Optimism bias adjustments are applied where required. Project controls teams integrate scope, cost and schedule into coherent baselines. Governance forums are defined. Assurance processes are agreed.

At the outset, the programme feels engineered.

But infrastructure does not unfold in a controlled laboratory. Over the life of a major programme, regulatory interpretations evolve, political priorities shift, market capacity tightens. Contractors merge or withdraw. Inflation behaves differently from forecast. Technology adoption accelerates or stalls. Public tolerance for disruption changes.

None of this is exceptional. Over a ten-year horizon, structural change is more probable than stability.

The real question is not whether change occurs, but whether early decisions were designed with sufficient resilience to absorb it. Designing for uncertainty is not pessimism. It is recognition that long-duration capital programmes rarely operate in stable environments and that early decisions framed only for efficiency under a central case are, in effect, optimised for conditions that are unlikely to persist.

Where Performance Pressure Really Emerges

When programmes come under pressure, they experience rising costs, schedule slippage, eroding contingency and strained supplier relationships. These visible symptoms tend to appear first in the controls environment. Cost Performance Index trends deteriorate. Schedule float compresses. Risk exposure begins to outpace mitigation capacity. Forecast at Completion moves incrementally, then materially. Re-baselining discussions begin.

There is usually a rational narrative: scope growth, productivity shortfalls, underestimated risk, inflationary pressure. And these explanations are rarely wrong. But they are often incomplete.

Repeated re-baselining cycles frequently signal something deeper. A procurement model may have assumed sustained bidder appetite in a market that later consolidated. A risk transfer strategy may have assumed contractors could price uncertainty that later proved unmanageable. A productivity assumption embedded in early estimates may have reflected reference projects operating under different regulatory or labour conditions. A demand model may have underestimated behavioural elasticity in response to technology or policy shifts.

At the time, these assumptions were supported by analysis. Risk modelling may have been undertaken using Monte Carlo simulation. External assurance may have confirmed the reasonableness of the baseline. Yet optimism bias does not always manifest as overt overconfidence. It often appears as structural alignment around a central case that feels credible, but proves narrow once the environment shifts.

Project controls teams frequently see the consequences first. Contingency begins to draw down earlier and faster than planned. Forecast reliability declines. Re-baselining becomes a recurring governance event rather than an exception. By then, however, the structural commitments shaping cost and schedule trajectories are already embedded.

When re-baselining becomes routine, controls data should not only trigger corrective action but also prompt reflection on whether the original framing remains appropriate.

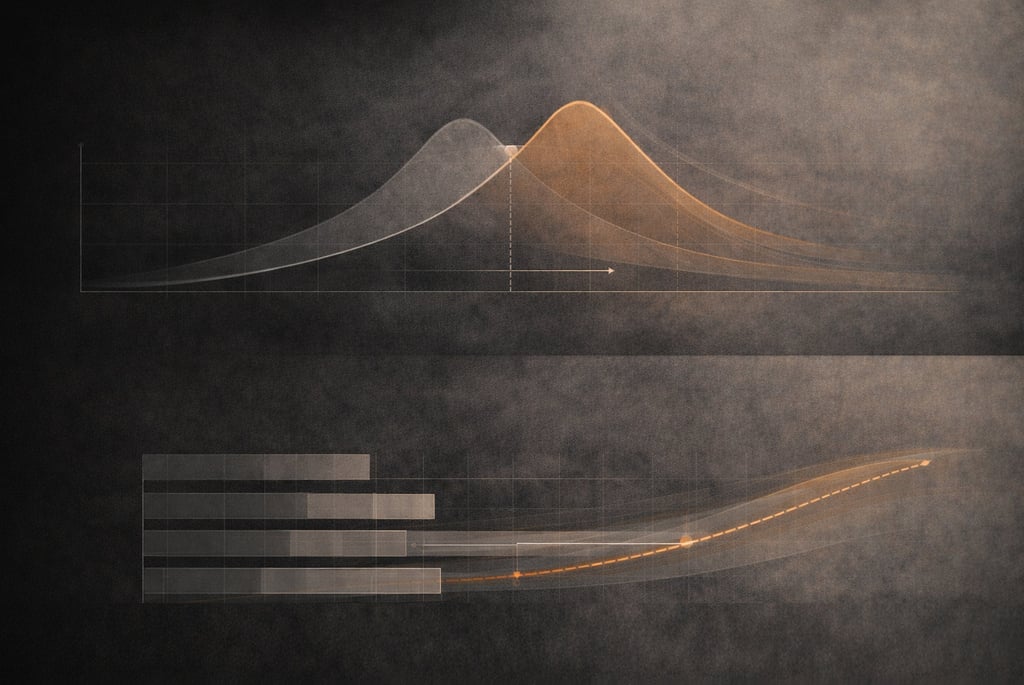

The Limits of the Central Case

Risk management provides essential discipline. It forces programmes to identify threats, quantify potential impact and allocate contingency against defined exposures. But most quantitative risk processes operate within a broadly agreed model of the future. They test variability around a baseline, not the validity of the baseline itself.

A Quantitative Risk Analysis may model cost and schedule variance within assumed ranges of productivity, inflation or scope maturity. What is less commonly tested is whether the structural assumptions underpinning the model remain credible over time:

If inflation remains elevated for five years rather than reverting to historical norms, does the funding model remain viable?

If contractor balance sheets weaken across the market, does the risk allocation model still function as designed?

If regulatory approval processes lengthen systematically, does the phasing logic hold?

These are not risks in the traditional register sense. They are shifts in the operating context and they sit outside the boundary of what most risk frameworks are designed to catch.

Risk can be modelled within known distributions. Deep uncertainty exists when the distribution itself is unstable. Managing risk well must therefore be complemented by periodically challenging the central assumptions that anchor the model.

Quantitative rigour remains vital, but it should sit alongside structured examination of how the operating context may evolve. Uncertainty affects the shape of the future, not just its variance. Resilience depends on questioning the boundaries of the model, not only refining its precision.
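The distinction between variance around a baseline and a shift in the baseline itself can be illustrated with a small simulation. The sketch below is a minimal illustration, not a method taken from any particular programme: the base cost, the five-year horizon, the inflation means and the spread are all hypothetical figures chosen for the example, and the function names are invented here. It sets a funding envelope at the P80 of a central-case model, then asks how that envelope fares if inflation stays structurally elevated.

```python
import random
import statistics


def simulate_outturn(base_cost, years, mean_inflation, sd_inflation,
                     runs=5000, seed=1):
    """Monte Carlo of outturn cost, compounding a normally distributed
    annual inflation rate over the given number of years."""
    rng = random.Random(seed)
    outturns = []
    for _ in range(runs):
        cost = base_cost
        for _ in range(years):
            cost *= 1 + rng.gauss(mean_inflation, sd_inflation)
        outturns.append(cost)
    return outturns


def p80(values):
    # quantiles(n=10) returns nine cut points; index 7 is the 80th percentile.
    return statistics.quantiles(values, n=10)[7]


# Hypothetical central case: inflation varies around 2% a year.
central = simulate_outturn(1000.0, 5, mean_inflation=0.02, sd_inflation=0.01)

# Hypothetical structural shift: inflation stays near 6% for all five years.
shifted = simulate_outturn(1000.0, 5, mean_inflation=0.06, sd_inflation=0.01)

envelope = p80(central)  # funding set at the central-case P80
breach_rate = sum(cost > envelope for cost in shifted) / len(shifted)

print(f"Central-case P80 envelope: {envelope:.0f}")
print(f"Median outturn if inflation stays elevated: {statistics.median(shifted):.0f}")
print(f"Share of shifted-regime runs breaching the envelope: {breach_rate:.0%}")
```

Under these assumed figures, the envelope that looked prudent within the central model is breached in almost every run once the regime changes. Refining the spread of the central case would not have revealed this; only questioning the mean does.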

Decisions as Load-Bearing Structures

Behind every baseline, contract structure and funding model lies a set of commitments that determine how a programme responds to stress. Some decisions are easily adjusted; others are effectively locked in for years. The difference matters enormously, yet it is rarely made explicit. Each of the following commitments rests on an assumption of this kind:

A target cost contract assumes that risk transfer will function within defined commercial behaviours.

An alliancing model assumes sustained collaborative incentives.

A fixed funding envelope assumes cost inflation remains within predictable bounds.

A design standard assumes regulatory stability over a defined period.

A reference class used for benchmarking assumes contextual comparability.

These decisions shape cost trajectories, supplier behaviour and strategic flexibility for years. Yet the assumptions within them are rarely treated as artefacts to be monitored in their own right.

They are implicit in baselines, embedded in cost curves and reflected in risk distributions, but seldom revisited explicitly once approval has been granted.

Consider a large transmission infrastructure programme that adopts an alliancing delivery model on the basis that a collaborative incentive structure will drive productivity and reduce interface risk. That assumption is reasonable at the time of contract formation. But if key personnel turn over significantly across multiple alliance partners, the behavioural conditions that made the model viable begin to erode quietly, beneath the surface of the controls data. By the time the effects appear in cost and schedule metrics, the structural commitment is years old and largely irreversible.

When programmes experience serial re-baselining, early contingency exhaustion or governance fatigue around tolerance resets, it is worth examining whether the issue lies not only in execution but in the resilience of the underlying decision logic. If early decisions function as load-bearing structures, they deserve the same ongoing scrutiny as the performance metrics they influence.

What Decision Intelligence Actually Means in Practice

Decision Intelligence is a discipline concerned with improving the quality of consequential decisions, not by adding more modelling, but by being more deliberate about what decisions depend on and how robust those dependencies are.

It is distinct from risk management in a specific way:

Risk management asks "what could go wrong within our model of the future?"; Decision Intelligence asks "how sound is the model itself, and what happens if its foundations shift?"

In practice, this often begins with identifying load-bearing assumptions, those which, if materially wrong, would alter the strategic viability of the programme, not merely its cost or schedule variance. These are then made explicit, assigned ownership, and subjected to periodic review as part of governance rather than treated as settled facts embedded in an approved baseline.

A practical assumption health review need not be complex. It involves four things:

Naming the assumption clearly.

Identifying the evidence it currently rests on.

Defining what would signal that the assumption is under strain.

Agreeing at what point that signal would trigger a formal review of the decision it underpins.
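The four elements above map naturally onto a simple register entry. The following is a minimal sketch, assuming a record structure invented for illustration: the `Assumption` class, its field names, the owner role and the turnover figures are all hypothetical, and the strain signal is assumed to be a single number where higher means more strain.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Assumption:
    name: str                # 1. the assumption, stated clearly
    evidence: str            # 2. the evidence it currently rests on
    strain_signal: str       # 3. what would indicate it is under strain
    review_threshold: float  # 4. signal level that triggers formal review
    owner: str               # who is responsible for asking the question
    last_reviewed: date


def needs_formal_review(assumption: Assumption, observed_signal: float) -> bool:
    """True when the observed signal has reached the agreed trigger point."""
    return observed_signal >= assumption.review_threshold


# Hypothetical entry for an alliancing model; all values are illustrative.
collaboration = Assumption(
    name="Alliance partners sustain collaborative behaviours",
    evidence="Partner track record and incentive design at contract formation",
    strain_signal="Annual turnover of key personnel across alliance partners",
    review_threshold=0.25,
    owner="Commercial director",
    last_reviewed=date(2024, 1, 15),
)

print(needs_formal_review(collaboration, observed_signal=0.10))
print(needs_formal_review(collaboration, observed_signal=0.30))
```

The point of the structure is less the code than the discipline it forces: an assumption cannot be entered without naming its evidence, its warning signal, its trigger and its owner.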

This can be integrated into existing programme governance without creating parallel bureaucracy, but it requires someone to be responsible for the question, and it requires governance forums to be willing to receive uncomfortable signals before they appear in the numbers.

Decision Intelligence also involves distinguishing between "reversible" and "irreversible" commitments, and shaping flexibility accordingly. It encourages structured testing of decisions across a small number of materially different futures. This is not exhaustive scenario planning, but enough to surface where optionality would be most valuable and where early lock-in carries the greatest fragility risk.
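One way to make this kind of testing concrete is to compare options across a handful of futures and look at the worst-case regret of each. The sketch below uses a minimax regret comparison, which is one common technique from decision analysis and is offered here as an illustration rather than as the method this article prescribes. The option names, scenario names and cost figures are all hypothetical.

```python
# Hypothetical outturn cost of each option under each future (arbitrary units).
costs = {
    "lock in early": {
        "central case": 100,
        "sustained high inflation": 140,
        "supplier consolidation": 150,
    },
    "retain optionality": {
        "central case": 108,
        "sustained high inflation": 120,
        "supplier consolidation": 122,
    },
}


def max_regret(costs):
    """For each option, return its largest shortfall against the best
    available option across all scenarios (lower means more robust)."""
    scenarios = list(next(iter(costs.values())))
    best = {s: min(option[s] for option in costs.values()) for s in scenarios}
    return {
        name: max(option[s] - best[s] for s in scenarios)
        for name, option in costs.items()
    }


regrets = max_regret(costs)
most_robust = min(regrets, key=regrets.get)

for name, regret in regrets.items():
    print(f"{name}: worst-case regret {regret}")
print(f"Most robust under these assumptions: {most_robust}")
```

In this invented example, early lock-in is cheapest under the central case but carries the larger worst-case regret, which is the pattern the distinction between reversible and irreversible commitments is meant to surface.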

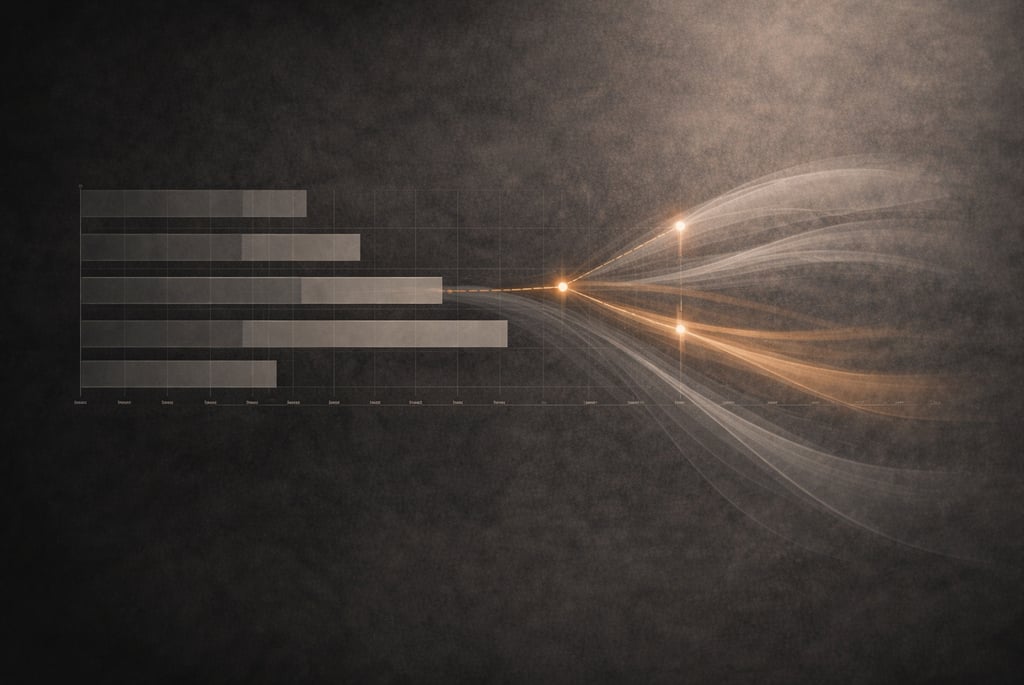

What this produces is not a better risk register. It is a richer strategic picture: one in which the controls data that programmes already generate is connected back to the decisions that shaped it, so that variance is understood in context rather than managed in isolation.

Controls Data as Strategic Intelligence

The most underused asset on a major infrastructure programme is often the controls data itself. Cost trends, contingency drawdown curves, forecast volatility patterns and risk maturity assessments collectively contain information that goes well beyond variance reporting. They are, in effect, a running commentary on the health of the assumptions embedded in the programme's foundations.

When contingency draws down consistently against a particular category of risk, that pattern may indicate not that individual risks are materialising within expected ranges, but that a structural assumption about contractor capacity, productivity or regulatory timelines is under strain. When forecast reliability declines over successive periods, it may indicate not execution weakness but growing misalignment between the decision architecture and the operating environment.
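A pattern of this kind can be picked out of existing contingency data with very little machinery. The sketch below is a minimal illustration under stated assumptions: the function name, the risk categories, the three-period persistence rule and all drawdown figures are hypothetical, and drawdown is assumed to be reported as a cumulative figure per category per period.

```python
def persistent_overdraw(planned, actual, min_periods=3):
    """Return the categories whose cumulative actual drawdown exceeded the
    planned drawdown in every one of the most recent `min_periods` periods."""
    flagged = []
    for category, plan in planned.items():
        recent = list(zip(plan, actual[category]))[-min_periods:]
        if len(recent) == min_periods and all(a > p for p, a in recent):
            flagged.append(category)
    return flagged


# Hypothetical cumulative contingency drawdown by period (arbitrary units).
planned = {
    "regulatory approvals": [1, 2, 3, 4],
    "ground conditions": [2, 4, 6, 8],
}
actual = {
    "regulatory approvals": [1, 3, 5, 7],
    "ground conditions": [2, 5, 5, 8],
}

flagged = persistent_overdraw(planned, actual)
print(flagged)
```

A single period above plan is ordinary variance. A category that stays above plan period after period is a prompt to ask which assumption sits behind that category, and whether it still holds.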

Making this connection explicit by treating controls outputs as signals about decision robustness (not only delivery performance) is where Decision Intelligence adds its most distinctive value to existing project controls practice. It does not replace the disciplines of earned value, quantitative risk analysis or schedule management. It uses their outputs differently: as evidence about the decisions that created them, not only the execution that followed.

This requires a change in how governance conversations are structured. Rather than asking only "are we on track?", the question becomes "what is the data telling us about the assumptions we are relying on?"

That is a harder conversation to have, particularly under delivery pressure. It requires programme leadership to be willing to surface structural concerns before they become visible crises, and governance bodies to engage with strategic signals rather than waiting for threshold breaches.

These are not trivial frictions. Funding profiles are often locked to political cycles. Contractors resist optionality because it complicates their own risk pricing. Programme teams working at pace have limited bandwidth for structured reflection. Decision Intelligence does not dissolve these constraints, but it does provide a discipline for navigating them more deliberately, and for ensuring that when adaptation is required, it is better informed and less reactive.

A More Powerful Performance Question

Over the life of a major infrastructure programme, re-baselining can start to feel inevitable. Contingency is drawn down and reallocated. Forecasts move. Governance tolerances are reset. Conversations become increasingly focused on explaining variance rather than examining its structural causes.

This does not reflect incompetence. It reflects the reality that infrastructure is delivered within systems that evolve while we build inside them. But it does reflect an opportunity.

The signals are already there: in the controls data, in the risk trends, in the governance conversations. The question is whether they are being read as evidence about delivery performance alone, or as evidence about the robustness of the decisions that shaped the programme.

Decision Intelligence provides a way of connecting those signals back to the decisions that generated them. It allows project controls data to inform strategic reflection, not just corrective action. And it enables programmes to ask not only whether performance is drifting, but whether the logic underpinning the programme remains fit for the environment it is operating in.

The real performance question is not only whether we are delivering to plan, but whether the decisions shaping that plan were designed with sufficient resilience to absorb change as it comes.

That is where the next step change in infrastructure performance may lie. Not in tighter control alone, but in better-designed decisions and in the organisational courage to keep examining them.

Where to Go Next

Decision Intelligence does not require organisational transformation to begin. It requires curiosity about the decisions already made, honesty about the assumptions they rest on, and the willingness to treat that inquiry as a normal part of governance: not an admission of weakness, but a mark of programme maturity.

To start the conversation, ask yourself: which assumptions is our programme most reliant on right now, and when did we last examine them? Are our governance conversations explaining variance, or exploring its origins? If a key assumption shifted materially tomorrow, would we know, and would we have the mechanisms to respond before it appeared in the numbers?

Written with the assistance of generative AI, but powered by humans with the knowledge, capability and experience to empower projects to drive better outcomes.

At Kaleido, we bring Decision Intelligence to life by empowering leaders and project teams to Anticipate, Evaluate and Respond to uncertainty with confidence. We help bring clarity from complexity by revealing meaningful insights leading to clearer actionable choices that ultimately drive better project outcomes.

Learn more about Deepak Mistry on LinkedIn: https://www.linkedin.com/in/deepak-mistry-74004989/
